Time is Money, Part 5: Validating the Fix

- Feb 20, 2019

- Guest Author

This is part five in a six-part series. You can find the previous posts below.

Time is Money, Part 1: Vulnerability Management Maturity Levels

Time is Money, Part 2: Vulnerability Analysis

Time is Money, Part 3: Vulnerability Assignment

Time is Money, Part 4: Fixing Vulnerabilities

Validation is an often overlooked step in the vulnerability management process. It’s tempting to scan for vulnerabilities, identify the most important ones and ram the fix through as quickly as possible so we can get back to doing things that are actually important to us. However, there are a few reasons why we might not want to ‘fire-and-forget’ when it comes to patching.

- False positives – the patch may not be missing or needed in the first place

- False negatives – vulnerability scans can fail to identify missing patches

There are a number of different ways we can validate patches, at different levels. One approach is simply to run a vulnerability check. Depending on how this vulnerability check is performed, it could be a weak or reliable source of validation. An external service banner or version check can be weak, as security patches are often ‘backported’, meaning that the vulnerability was fixed, but the version numbers stay the same.
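To make the backporting problem concrete, here is a minimal sketch comparing a banner-style version check with a check against the vendor's package release number. The version strings, release numbers, and function names are all hypothetical and chosen only for illustration; real scanners and package managers use more involved comparison rules.

```python
def parse_version(text):
    """Turn a dotted version such as '7.4' into a tuple of ints for comparison."""
    return tuple(int(part) for part in text.split("."))


def banner_check(banner_version, upstream_fixed_version):
    """Weak check: flag the host if the advertised version predates the upstream fix."""
    return parse_version(banner_version) < parse_version(upstream_fixed_version)


def package_check(installed_release, backport_fixed_release):
    """Stronger check: compare the vendor package release, which changes on a backport."""
    return installed_release < backport_fixed_release


# Hypothetical host: the service still advertises 7.4, but the vendor
# backported the fix in package release 9 and the host runs release 11.
banner_version = "7.4"
upstream_fixed_version = "7.5"
installed_release = 11
backport_fixed_release = 9

print(banner_check(banner_version, upstream_fixed_version))      # True: looks vulnerable
print(package_check(installed_release, backport_fixed_release))  # False: fix is present
```

In this made-up case the banner check keeps reporting the host as vulnerable after the patch, which is the kind of result validation needs to catch.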

An authenticated scan is better, as the vulnerability check can use version information from an operating system software registry, which is generally more reliable. The most reliable validation, however, comes from checking files on the host, either directly or through an installed software agent, or from attempting to exploit the vulnerability itself. Not all vulnerabilities can be exploited safely, so the latter approach must be used carefully.
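One way to picture a direct file check is to compare the hash of a file on the host with hashes of known patched builds. The sketch below assumes such a list of patched hashes is available. Where it comes from, the `file_is_patched` helper, and the demo file contents are all assumptions for illustration, not a description of how any particular agent works.

```python
import hashlib
import os
import tempfile


def sha256_of_file(path, chunk_size=65536):
    """Hash a file in chunks so large binaries do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def file_is_patched(path, patched_hashes):
    """Return True if the file's hash matches a known patched build (hypothetical list)."""
    return sha256_of_file(path) in patched_hashes


def demo():
    """Write a stand-in 'binary' to a temp file and check it against two hash lists."""
    with tempfile.NamedTemporaryFile(delete=False) as handle:
        handle.write(b"patched build")
        path = handle.name
    try:
        known_patched = {hashlib.sha256(b"patched build").hexdigest()}
        return file_is_patched(path, known_patched), file_is_patched(path, set())
    finally:
        os.remove(path)


print(demo())
```

Unlike a banner check, this looks at what is actually installed, so a backported fix with an unchanged version number would still be recognized.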

NopSec has a method for validating both vulnerabilities and patches. As mentioned in Time is Money Part 2, vulnerability scanners sometimes make faulty guesses. E3 Engine, which is part of Unified VRM, can test vulnerabilities not only during the analysis phase but also during the validation phase, to ensure the patch really has resolved the vulnerability.

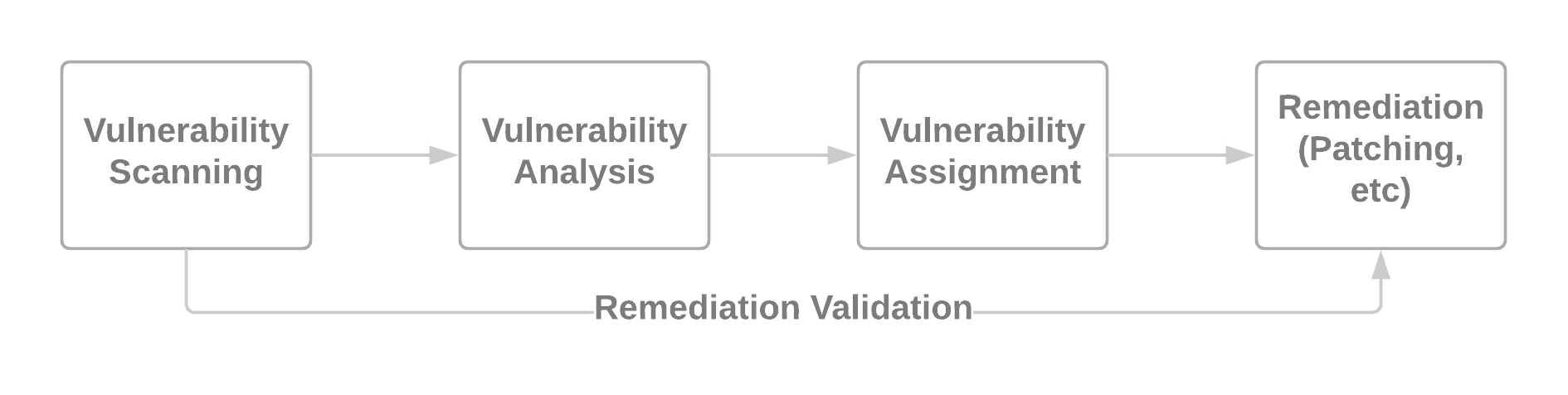

To validate fixes properly, we must also consider the environment and the existing patch workflow. In Time is Money Part 2, we provided a common vulnerability management workflow.

Simplified Vulnerability Management Process

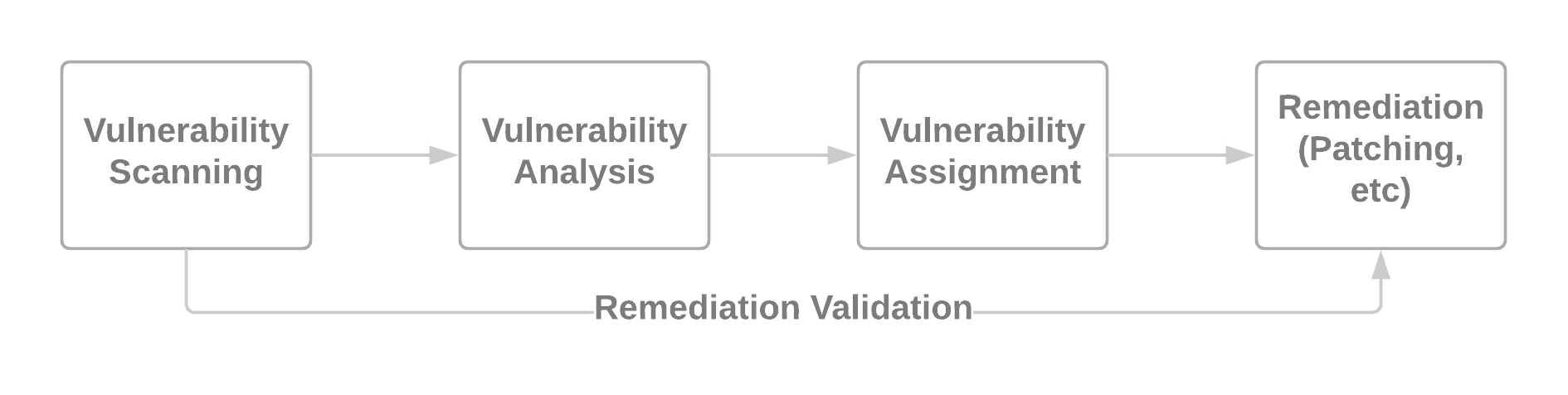

However, not all patching workflows begin with vulnerability scans, and some environments are trickier to navigate because of mitigating controls. These mitigating controls could include security products such as web application firewalls (WAFs) or host intrusion prevention software (HIPS). Consider the following alternative workflows.

Diving deeper into workflow 1 above: if mitigating controls are common in an environment, using exploits to validate patches may not make sense and can result in false positives, because a control that blocks the exploit makes an unpatched host look fixed. A mitigating control may stop an attack as long as it is functioning, but a sophisticated adversary may realize these controls are in play and find a way to disable or bypass them. The principle of defense in depth advises us not to rely too heavily on any single layer of defense. Here, that means we should both patch and put mitigating controls in place, not just one or the other.
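The reasoning about exploit-based validation behind a mitigating control can be sketched as a small decision rule. The verdict names and the `interpret_exploit_result` function are hypothetical. The point is only that a failed exploit proves little when a WAF or HIPS may have blocked it.

```python
from enum import Enum


class Verdict(Enum):
    STILL_VULNERABLE = "still vulnerable"
    LIKELY_PATCHED = "likely patched"
    INCONCLUSIVE = "inconclusive"


def interpret_exploit_result(exploit_succeeded, mitigating_control_active):
    """Classify an exploit-based validation attempt (illustrative rule only)."""
    if exploit_succeeded:
        # The exploit worked, so neither the patch nor any control stopped it.
        return Verdict.STILL_VULNERABLE
    if mitigating_control_active:
        # The control may have blocked the exploit; the patch is unproven.
        return Verdict.INCONCLUSIVE
    # Nothing else stood in the way, so the patch most likely did its job.
    return Verdict.LIKELY_PATCHED


print(interpret_exploit_result(False, True).value)   # inconclusive
print(interpret_exploit_result(False, False).value)  # likely patched
```

An inconclusive verdict would be a cue to fall back on another validation method, such as an authenticated scan or a file check on the host.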

The next post in the series is the sixth and final post, which will take a step back and look at calculating how much time we can actually save with all the manual processes we’ve automated and all the additional efficiency we’ve gained.