Verizon DBIR: Analysis of the 2020 Verizon Data Breach Investigations Report

- May 21, 2020

- Michelangelo Sidagni

It is that time of the year again! The Verizon 2020 Data Breach Investigations Report (DBIR) is out (https://enterprise.verizon.com/resources/reports/dbir/), and most of the information security industry is busy reading and analyzing it cover to cover.

I am no exception. I found this year's report particularly well written and insightful. I was struck that, right at the beginning, the authors include a clear disclaimer about the "sample bias" inherent in every statistical analysis: the way the data sample is selected and filtered influences the results to some degree. Every statistical report that claims to be "scientific" should include a disclaimer like this one.

I am sure my infosec colleagues have analyzed the report cover to cover, mostly from the incident response and intrusion detection perspective. This year I would like to focus my analysis on what the report implies for an organization's vulnerability management program.

Right in the introduction, the report's authors quote Oscar Wilde's "The Picture of Dorian Gray" ("Experience is merely the name men gave to their mistakes") to highlight the report's major finding: an increase in security incidents that can be directly attributed to human error, as opposed to the deliberate actions of motivated attackers.

In terms of tactics, "Hacking" techniques are still widely used and growing, but human errors and system misconfigurations are growing in both number and relevance as causes of security incidents. In third position, social engineering is another relevant attack vector that leads to security breaches. Malware and C2 "infection" techniques, on the other hand, are in a distant fourth place, at only 17%.

These data points matter from the vulnerability management standpoint because they indicate that attackers take advantage of both unpatched and misconfigured systems to carry out their intrusions. System security configuration management and operating system hardening are therefore important again for breach prevention, not just a well-oiled patch management process.

Social engineering, in the form of spear phishing, is mainly used to trick users into clicking a link that leads to an attacker-controlled website, which either harvests valid credentials or installs Remote Access Trojans (RATs), malware downloaders, or other types of C2 backdoors. Malicious document attachments are still in use, but the technique is in decline.

The decline of malware, at least in the initial phases of an intrusion, is a testament to the fact that the security controls organizations have deployed are actually working.

The one form of malware that continues to grow and remain effective is ransomware, which is still widespread even though the vulnerabilities it uses to spread keep getting patched.

In terms of actors, external, financially motivated actors are responsible for most of the analyzed breaches. This underscores the importance of continuously patching and securely configuring Internet-exposed systems, so that an initial compromise cannot escalate into a "deep" breach that also reaches the organization's internal networks. The report notes that breaches involving an internal actor are less common; however, the risk of "lateral movement" within the internal network should not be underestimated.

In terms of manual "Hacking" techniques, brute-forcing and the use of stolen credentials are still the number one way for attackers to gain a foothold on compromised systems. In a distant second place, the exploitation of unpatched vulnerabilities is still in use, but much less than in the past, which suggests that known vulnerabilities with a CVE are being patched at an increased rate. In third and sixth position, respectively, are the use of C2 and backdoor tools (leveraging the previously obtained credentials) and the exploitation of SQL injection vulnerabilities in web applications to gain a foothold.

In terms of "Hacking" vectors, web application exploitation is still the number one attack vector, which points to the importance of web application vulnerability management and of DevSecOps practices for fixing web application vulnerabilities. It also highlights the value of a "full stack" approach to vulnerability management, which brings both infrastructure and web application code vulnerabilities, along with their remediation strategies, into a single pane of glass.

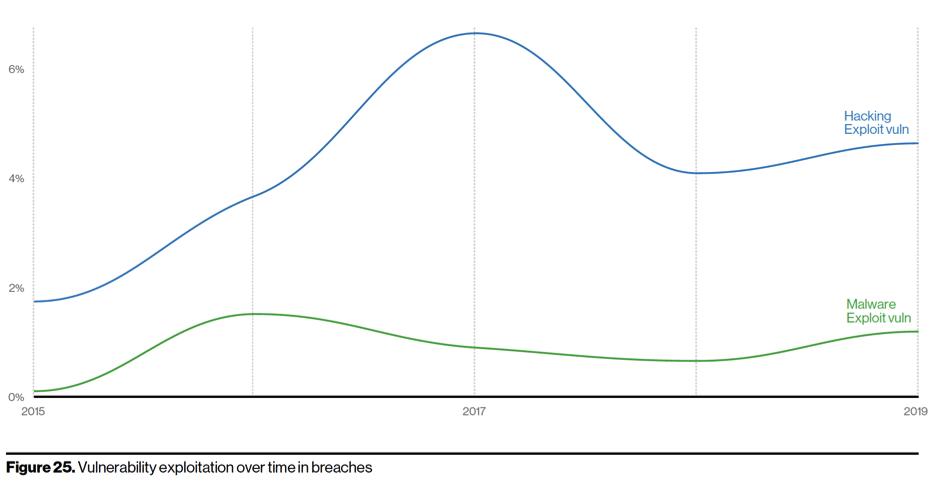

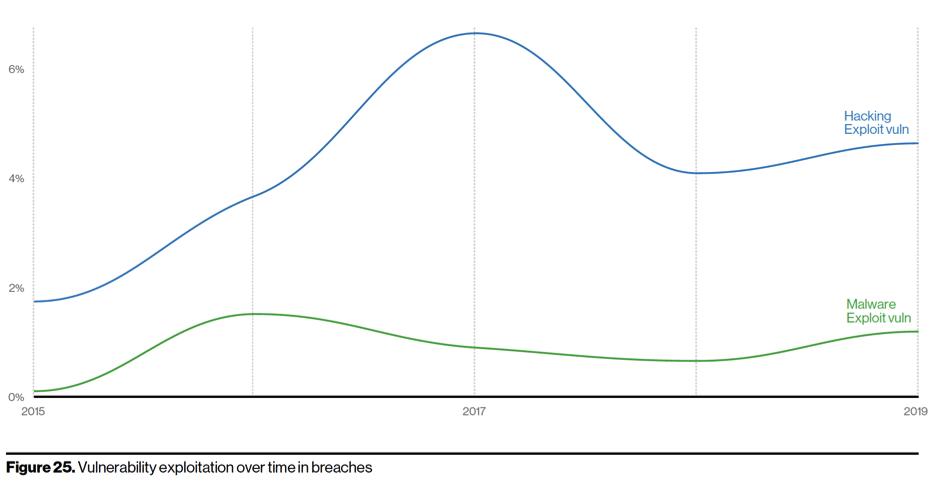

In terms of vulnerability exploitation, the report's chart above shows that manual exploitation of vulnerabilities continues to increase, while exploitation delivered through malware remains relatively stable. This is likely because security controls have become smarter at detecting and blocking malware, including the exploits it carries.

The other vulnerability management "gem" in the report is this one: "There are lots of vulnerabilities discovered, and lots of vulnerabilities found by organizations scanning and patching, but a relatively small percentage of them are used in breaches". Attackers will exploit a vulnerability when it is low-hanging fruit, but using harvested valid credentials is their preferred access method. "Clearly, the attackers are out there and if you leave unpatched stuff on the internet, they'll find it and add it to their infrastructure".

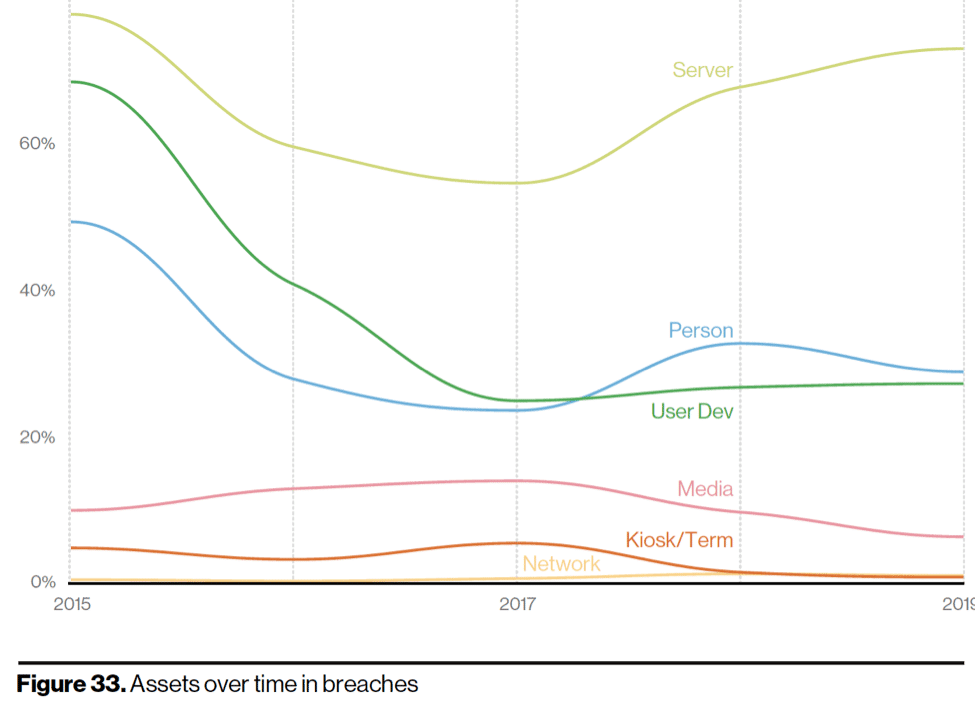

The report also devotes a lot of attention to new vulnerabilities and the hype and publicity that come with them. It asks whether new vulnerabilities make the Internet a more vulnerable place, and the answer is revealing: "Organizations that patch seem to be able to maintain a good, prioritized patch management regime. Attackers will try easy-to-exploit vulnerabilities if they encounter them while driving around the internet". In other words, organizations that maintain a good vulnerability, configuration, and patch management program on an ongoing basis should not worry too much about new vulnerabilities, because they will be able to patch them as a matter of routine. From an asset management perspective, however, organizations should worry about vulnerabilities found on uncataloged, non-inventoried assets whose existence they are not even aware of. To quote the report: "That suggests that the vulnerabilities are likely not the result of consistent vulnerability management applied slowly, but a lack of asset management instead".
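To make the asset management point concrete, here is a minimal sketch of cross-referencing vulnerability scanner findings against an asset inventory to surface vulnerable hosts that nobody has cataloged. The `Finding` structure, the function names, the sample hosts, and the placeholder CVE IDs are all hypothetical; a real program would pull this data from its own scanner and CMDB exports, whose formats vary.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """A single scanner finding (hypothetical structure, for illustration only)."""
    host: str        # IP address or hostname reported by the scanner
    cve: str         # CVE identifier reported for the host (placeholders below)
    severity: float  # e.g. a CVSS base score


def split_by_inventory(findings, inventory):
    """Split findings into those on inventoried assets and those on assets
    that are missing from the inventory."""
    known, unknown = [], []
    for f in findings:
        (known if f.host in inventory else unknown).append(f)
    return known, unknown


def unmanaged_hosts(findings, inventory):
    """Return the sorted list of hosts that appear in scans but not in the inventory."""
    return sorted({f.host for f in findings if f.host not in inventory})


if __name__ == "__main__":
    # Hypothetical asset inventory (e.g. exported from a CMDB).
    inventory = {"10.0.0.5", "10.0.0.7", "web01.example.com"}

    # Hypothetical scan results; the CVE IDs are placeholders, not real CVEs.
    findings = [
        Finding("10.0.0.5", "CVE-0000-0001", 7.5),
        Finding("10.0.0.9", "CVE-0000-0002", 9.8),
        Finding("web01.example.com", "CVE-0000-0003", 5.3),
        Finding("10.0.0.9", "CVE-0000-0004", 6.1),
    ]

    known, unknown = split_by_inventory(findings, inventory)
    print(f"{len(known)} findings on inventoried assets")
    print(f"{len(unknown)} findings on uninventoried assets")
    print("Hosts missing from inventory:", unmanaged_hosts(findings, inventory))
```

With the sample data, the script reports two findings on inventoried assets, two on uninventoried assets, and flags `10.0.0.9` as missing from the inventory. Hosts in that last list are the kind of blind spot the report describes: they receive no patching attention simply because nobody knows they exist.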

In terms of asset categories, the report's chart above shows that servers are still the most frequently exploited assets in security breaches. Among those servers, the most relevant categories are web application, mail, and database servers, which again points to the importance of a "full stack" vulnerability management approach.

A brief note on "cloud" security breaches: the report mentions that cloud assets are involved in 24% of all breaches and that 73% of those cloud breaches involve email and web applications. Furthermore, 77% of the cloud breaches involve stolen cloud credentials. Information disclosure via an open cloud bucket falls under the "human error" category, and presumably this is a large part of what makes that category so relevant as a security incident vector.

To wrap up this commentary, here are a few final thoughts.

Overall, I liked this year's Verizon DBIR. The most mind-blowing finding for me was that the most relevant attack vectors for external, financially motivated breaches are still human error, misconfigurations, and the use of valid credentials, rather than vulnerability exploitation, especially in regulated industry verticals such as finance/insurance and healthcare. This suggests that organizations should focus on security configuration management and configuration quality control as much as on security patch management.

And I thought it was 2020 in terms of organizations' security maturity!